Mind the gap between testing and production: applying process mining to test the resilience of exchange platforms

Being highly sophisticated by design, exchange platforms require continuous testing to ensure their resilience. Defects can slip through the gaps in test coverage, so identifying and closing such gaps is of the utmost priority. Various techniques are available to support this process.

One of them is process mining, a family of business analysis techniques used to extract information about distributed systems from their execution logs and network captures. Such data is in no short supply for complex technology platforms that process huge volumes of inbound, outbound and internal messages daily.

To illustrate how the process mining approach can be used to enhance functional validation, let's consider the simplest example of a trade order lifecycle.

In general, an order lifecycle comprises several stages. After a participant establishes the connection and sends an order, the system validates the request, and, based on this, places or does not place the order into the order book for further activities. The system assigns an order status tag to this request at every step of its lifecycle, with each order status change communicated to the participant via execution report messages. Even for this simple example, there are numerous potential execution scenarios involving different sequences of order status changes.
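The idea of treating status changes as sequences can be sketched in a few lines of Python. This is a minimal illustration, not the tooling described in this article: the report tuples, the status names and the `count_transitions` helper are all assumptions made for the example. It groups execution reports by order, sorts them by sequence number and counts each pair of consecutive statuses.

```python
from collections import Counter, defaultdict

# Hypothetical execution reports: (order_id, sequence_number, order_status).
# The status names are illustrative and not tied to a specific protocol.
reports = [
    ("A", 1, "New"),
    ("A", 2, "PartiallyFilled"),
    ("A", 3, "Filled"),
    ("B", 1, "New"),
    ("B", 2, "Cancelled"),
    ("C", 1, "Rejected"),
]


def count_transitions(reports):
    """Count how often one order status directly follows another."""
    by_order = defaultdict(list)
    for order_id, seq, status in reports:
        by_order[order_id].append((seq, status))

    transitions = Counter()
    for events in by_order.values():
        # Prepend an artificial "Start" node so the first status is also an edge.
        statuses = ["Start"] + [status for _, status in sorted(events)]
        transitions.update(zip(statuses, statuses[1:]))
    return transitions


transitions = count_transitions(reports)
for (source, target), count in sorted(transitions.items()):
    print(f"{source} -> {target}: {count}")
```

Each key of the resulting counter is one edge of the order status graph, and the count shows how often that edge was exercised.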

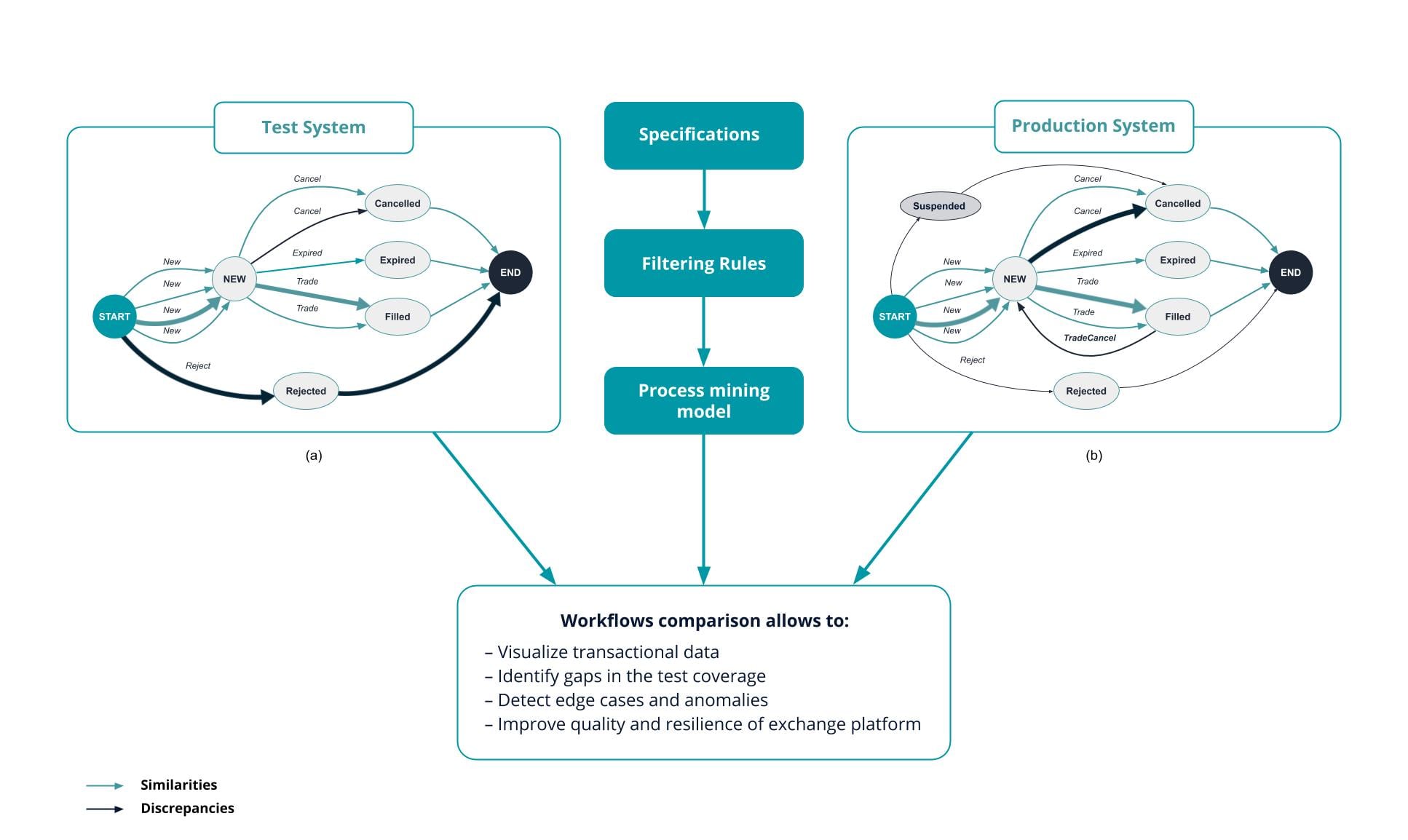

A graph representation of these changes makes it possible to assess test coverage in a human-readable format (see the diagram). Visualising test execution data may reveal, for example, that the transactions extracted from the test environment lack Suspended order statuses and TradeCancel state transitions (a). In contrast, a similar visualisation based on the production transactions dataset shows that Suspended order statuses and TradeCancel transitions are present, with far fewer Reject order statuses and more Cancel transitions (b).

As demonstrated in the diagram, the process mining approach involves several steps. First, the transactional data is captured from the system under test or from the production environment. This data includes test execution results, application process logs and network captures. Then, the data is combined into a single database and transformed into a set of flat files, which are subsequently used to build a process mining model. Additionally, system specifications and rule-based filtering can be used to simplify the model.
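The data preparation steps can be sketched as follows. This is a simplified illustration under stated assumptions: the three in-memory lists stand in for real test results, application logs and network captures, the field names (`ts`, `order_id`, `status`) are hypothetical, and the intermediate database is skipped in favour of writing the flat file directly.

```python
import csv
import io

# Hypothetical records from the three data sources; field names are assumptions.
test_results = [{"ts": "2024-01-01T09:00:02", "order_id": "A", "status": "Filled"}]
app_logs = [{"ts": "2024-01-01T09:00:00", "order_id": "A", "status": "New"}]
net_captures = [{"ts": "2024-01-01T09:00:01", "order_id": "A", "status": "PartiallyFilled"}]


def to_event_log(**sources):
    """Merge records from all sources into one list ordered by order and time."""
    rows = []
    for name, records in sources.items():
        for record in records:
            rows.append({**record, "source": name})
    # ISO 8601 timestamps in the same format sort correctly as plain strings.
    rows.sort(key=lambda row: (row["order_id"], row["ts"]))
    return rows


def write_flat_file(rows, handle):
    """Write the merged event log as CSV, one event per line."""
    writer = csv.DictWriter(handle, fieldnames=["order_id", "ts", "status", "source"])
    writer.writeheader()
    writer.writerows(rows)


rows = to_event_log(
    test_results=test_results, app_logs=app_logs, net_captures=net_captures
)
buffer = io.StringIO()  # stands in for a file on disk
write_flat_file(rows, buffer)
flat_file = buffer.getvalue()
print(flat_file)
```

A flat layout with a case identifier (here the order), a timestamp and an activity (here the status) is the usual input shape for process mining tools, which is why the sketch normalises every source to those columns.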

With process mining techniques in place, tools can compare the test and production graph representations and highlight the discrepancies. This allows QA engineers, subject matter experts and compliance analysts to identify the functional areas that require more extensive test coverage and to enhance test libraries accordingly. Another benefit of this approach is that functional and non-functional testing libraries can be compared against each other to understand whether they cover the same transitions.
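A comparison of this kind can be approximated with plain set and frequency arithmetic. The sketch below is an assumption-laden illustration rather than the actual tooling: the transition counts are invented, and `coverage_gaps` and `frequency_shift` are hypothetical helper names. It lists the transitions seen in production but never in testing, and shows how the relative frequency of shared transitions differs between the two environments.

```python
from collections import Counter

# Hypothetical transition counts; the numbers are invented for illustration.
test_graph = Counter({
    ("New", "Filled"): 120,
    ("New", "Rejected"): 80,
    ("New", "Cancelled"): 5,
})
prod_graph = Counter({
    ("New", "Filled"): 9000,
    ("New", "Rejected"): 40,
    ("New", "Cancelled"): 700,
    ("New", "Suspended"): 30,
    ("Filled", "TradeCancel"): 12,
})


def coverage_gaps(test, prod):
    """Return transitions observed in production but never in testing."""
    return sorted(set(prod) - set(test))


def frequency_shift(test, prod):
    """Return production share minus test share for each shared transition."""
    test_total = sum(test.values())
    prod_total = sum(prod.values())
    return {
        edge: prod[edge] / prod_total - test[edge] / test_total
        for edge in set(test) & set(prod)
    }


missing = coverage_gaps(test_graph, prod_graph)
shift = frequency_shift(test_graph, prod_graph)

print("Not covered by tests:", missing)
for edge, delta in sorted(shift.items()):
    print(f"{edge[0]} -> {edge[1]}: {delta:+.3f}")
```

A negative shift means the transition is over-represented in testing relative to production, and a positive shift means it is under-represented. The same two functions could be applied to functional and non-functional test libraries to check whether they exercise the same transitions.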

Test coverage analysis is a crucial part of proving the resilience of mission-critical platforms. The described approach allows the visualisation of transactional data to obtain a direct representation of coverage and, ultimately, close the testing gaps to assure the orderly functioning of the global financial markets.

This is an advertorial from Exactpro Systems.